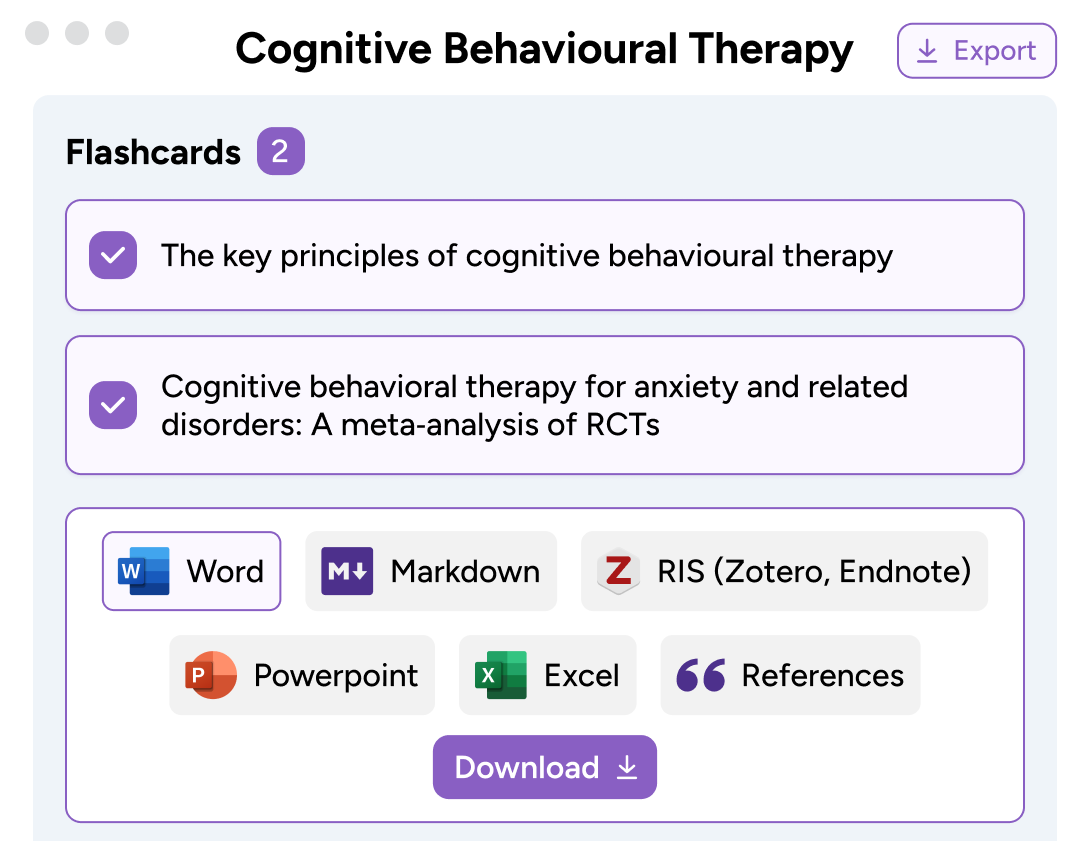

Extract key information from research papers with our AI summarizer.

Get a snapshot of what matters – fast. Break down complex concepts into easy-to-read sections. Skim or dive deep with a clean reading experience.

Summarize, analyze, and organize your research in one place.

Features built for scholars like you, trusted by researchers and students around the world.

Summarize papers, PDFs, book chapters, online articles and more.

Easy import

Drag and drop files, enter the URL of a page, paste a block of text, or use our browser extension.

Enhanced summary

Change the summary to suit your reading style. Choose from a bulleted list, one-liner and more.

Read the key points of a paper in seconds with confidence that everything you read comes from the original text.

Clean reading

Clutter-free flashcards help you skim or dive deeper into the details and quickly jump between sections.

Highlighted key terms and findings. Let evidence-based statements guide you through the full text with confidence.

Summarize texts in any format

Scholarcy’s AI summarization tool is designed to generate accurate, reliable article summaries.

Our summarizer tool is trained to identify key terms, claims, and findings in academic papers. These insights are turned into digestible Summary Flashcards.

The knowledge extraction and summarization methods we use focus on accuracy. This ensures what you read is factually correct, and can always be traced back to the original source.

What students say

It would normally take me 15mins – 1 hour to skim read the article but with Scholarcy I can do that in 5 minutes.

Scholarcy makes my life easier because it pulls out important information in the summary flashcard.

Scholarcy is clear and easy to navigate. It helps speed up the process of reading and understanding papers.

Join over 400,000 people already saving time.

From A to Z with Scholarcy

Generate flashcard summaries. Discover more aha moments. Get to the point quicker.

Understand complex research. Jump between key concepts and sections. Highlight text. Take notes.

Build a library of knowledge. Recall important info with ease. Organize, search, sort, edit.

Bring it all together. Export Flashcards in a range of formats. Transfer Flashcards into other apps.

Apply what you’ve learned. Compile your highlights, notes, references. Write that magnum opus 🤌

Go beyond summaries

Get unlimited summaries, advanced research and analysis features, and your own personalised collection with Scholarcy Library!

With Scholarcy Library you can import unlimited documents and generate summaries for all your course materials or collection of research papers.

Scholarcy Library offers additional features including access to millions of academic research papers, customizable summaries, direct import from Zotero and more.

Scholarcy lets you build and organise your summaries into a handy library that you can access from anywhere. Export from a range of options, including one-click bibliographies and even a literature matrix.

Compare plans

Summarize 3 articles a day with our free summarizer tool, or upgrade to Scholarcy Library to generate and save unlimited article summaries.

Import a range of file formats

Export flashcards (one at a time)

Everything in Free

Unlimited summarization

Generate enhanced summaries

Save your flashcards

Take notes, highlight and edit text

Organize flashcards into collections

Frequently Asked Questions

- How do I use Scholarcy?

- What if I’m having issues importing files?

- Can Scholarcy generate a plain language summary of the article?

- Can Scholarcy process any size document?

- How do I change the summary to get better results?

- What if I upload a paywalled article to Scholarcy? Is it violating copyright laws?

Summarize any text in a click.

TLDR This helps you summarize any piece of text into concise, easy to digest content so you can free yourself from information overload.

Enter an Article URL or paste your Text

Browser extensions

Use the TLDR This browser extensions to summarize any webpage in a click.

Single platform, endless summaries

Transforming information overload into manageable insights — consistently striving for clarity.

100% Automatic Article Summarization with just a click

In the sheer amount of information that bombards Internet users from all sides, hardly anyone wants to devote their valuable time to reading long texts. TLDR This's clever AI analyzes any piece of text and summarizes it automatically, in a way that makes it easy for you to read, understand and act on.

Article Metadata Extraction

TLDR This, the online article summarizer tool, not only condenses lengthy articles into shorter, digestible content, but it also automatically extracts essential metadata such as author and date information, related images, and the title. Additionally, it estimates the reading time for news articles and blog posts, ensuring you have all the necessary information consolidated in one place for efficient reading.

- Automated author-date extraction

- Related images consolidation

- Instant reading time estimation

Distraction and ad-free reading

As an efficient article summarizer tool, TLDR This meticulously eliminates ads, popups, graphics, and other online distractions, providing you with a clean, uncluttered reading experience. Moreover, it enhances your focus and comprehension by presenting the essential content in a concise and straightforward manner, thus transforming the way you consume information online.

Avoid the Clickbait Trap

TLDR This smartly selects the most relevant points from a text, filtering out weak arguments and baseless speculation. It allows for quick comprehension of the essence, without needing to sift through all paragraphs. By focusing on core substance and disregarding fluff, it enhances efficiency in consuming information, freeing more time for valuable content.

- Filters weak arguments and speculation

- Highlights most relevant points

- Saves time by eliminating fluff

Who is TLDR This for?

TLDR This is a summarizing tool designed for students, writers, teachers, institutions, journalists, and any internet user who needs to quickly understand the essence of lengthy content.

Anyone with access to the Internet

TLDR This is for anyone who just needs to get the gist of a long article. You can read this summary, then go read the original article if you want to.

TLDR This is for students studying for exams, who are overwhelmed by information overload. This tool will help them summarize information into a concise, easy to digest piece of text.

TLDR This is for anyone who writes frequently, and wants to quickly summarize their articles for easier writing and easier reading.

TLDR This is for teachers who want to summarize a long document or chapter for their students.

Institutions

TLDR This is for corporations and institutions who want to condense a piece of content into a summary that is easy to digest for their employees/students.

Journalists

TLDR This is for journalists who need to summarize a long article for their newspaper or magazine.

Featured by the world's best websites

Our platform has been recognized and utilized by top-tier websites across the globe, solidifying our reputation for excellence and reliability in the digital world.

Focus on the Value, Not the Noise.

Article Summaries, Reviews & Critiques

Writing an article summary.

Links on this guide may go to external web sites not connected with Randolph Community College. Their inclusion is not an endorsement by Randolph Community College and the College is not responsible for the accuracy of their content or the security of their site.

When writing a summary, the goal is to compose a concise and objective overview of the original article. The summary should focus only on the article's main ideas and important details that support those ideas.

Guidelines for summarizing an article:

- State the main ideas.

- Identify the most important details that support the main ideas.

- Summarize in your own words.

- Do not copy phrases or sentences unless they are being used as direct quotations.

- Express the underlying meaning of the article, but do not critique or analyze.

- The summary should be about one third the length of the original article.
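The one-third guideline above can be turned into a quick length check. The following is a minimal sketch, assuming that length is measured in whitespace-separated words; the function name `target_summary_words` is hypothetical and used only for illustration.

```python
def target_summary_words(article_text: str, ratio: float = 1 / 3) -> int:
    """Return an approximate word budget for a summary.

    Assumption: "length" means a count of whitespace-separated words,
    and the budget is the article's word count multiplied by the ratio.
    """
    word_count = len(article_text.split())
    return round(word_count * ratio)


# Stand-in for a 900-word article (illustrative data only).
article = "word " * 900
print(target_summary_words(article))
```

Treat the result as a rough target rather than a strict limit; the guideline itself says "about" one third.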

Your summary should include:

- Give an overview of the article, including the title and the name of the author.

- Provide a thesis statement that states the main idea of the article.

- Use the body paragraphs to explain the supporting ideas of your thesis statement.

- One-paragraph summary - one sentence per supporting detail, providing 1-2 examples for each.

- Multi-paragraph summary - one paragraph per supporting detail, providing 2-3 examples for each.

- Start each paragraph with a topic sentence.

- Use transitional words and phrases to connect ideas.

- Summarize your thesis statement and the underlying meaning of the article.

Adapted from "Guidelines for Using In-Text Citations in a Summary (or Research Paper)" by Christine Bauer-Ramazani, 2020

Additional Resources

All links open in a new window.

How to Write a Summary - Guide & Examples (from Scribbr.com)

Writing a Summary (from The University of Arizona Global Campus Writing Center)

- Last Updated: Mar 15, 2024 9:32 AM

- URL: https://libguides.randolph.edu/summaries

How to Write a Summary (Examples Included)

By Ashley Shaw

Have you ever recommended a book to someone and given them a quick overview? Then you’ve created a summary before!

Summarizing is a common part of everyday communication. It feels easy when you’re recounting what happened on your favorite show, but what do you do when the information gets a little more complex?

Written summaries come with their own set of challenges. You might ask yourself:

- What details are unnecessary?

- How do you put this in your own words without changing the meaning?

- How close can you get to the original without plagiarizing it?

- How long should it be?

The answers to these questions depend on the type of summary you are doing and why you are doing it.

A summary in an academic setting is different to a professional summary—and both of those are very different to summarizing a funny story you want to tell your friends.

One thing they all have in common is that you need to relay information in the clearest way possible to help your reader understand. We’ll look at some different forms of summary, and give you some tips on each.

Let’s get started!

What Is a Summary?

- How do you write a summary?

- How do you write an academic summary?

- What are the four types of academic summaries?

- How do I write a professional summary?

- Writing or telling a summary in personal situations

- Summarizing summaries

A summary is a shorter version of a larger work. Summaries are used at some level in almost every writing task, from formal documents to personal messages.

When you write a summary, you have an audience that doesn’t know every single thing you know.

When you want them to understand your argument, topic, or stance, you may need to explain some things to catch them up.

Instead of having them read the article or hear every single detail of the story or event, you instead give them a brief overview of what they need to know.

Academic, professional, and personal summaries each require you to consider different things, but there are some key rules they all have in common.

Let’s go over a few general guides to writing a summary first.

1. A summary should always be shorter than the original work, usually considerably.

Even if your summary is the length of a full paper, you are likely summarizing a book or other significantly longer work.

2. A summary should tell the reader the highlights of what they need to know without giving them unnecessary details.

3. It should also include enough details to give a clear and honest picture.

For example, if you summarize an article that says “The Office is the greatest television show of all time,” but don’t mention that they are specifically referring to sitcoms, then you changed the meaning of the article. That’s a problem! Similarly, if you write a summary of your job history and say you volunteered at a hospital for the last three years, but you don’t add that you only went twice in that time, it becomes a little dishonest.

4. Summaries shouldn’t contain personal opinion.

While in the longer work you are creating you might use opinion, within the summary itself, you should avoid all personal opinion. A summary is different than a review. In this moment, you aren’t saying what you think of the work you are summarizing, you are just giving your audience enough information to know what the work says or did.

Now that we have a good idea of what summaries are in general, let’s talk about some specific types of summary you will likely have to do at some point in your writing life.

An academic summary is one you will create for a class or in other academic writing. The exact elements you will need to include depend on the assignment itself.

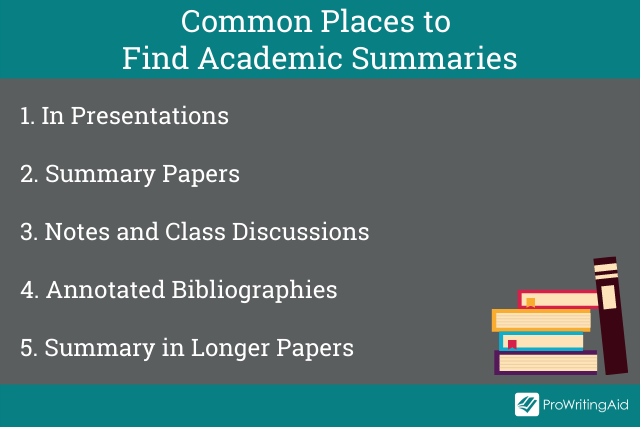

However, when you’re asked for an academic summary, this usually means one of five things, all of which are pretty similar:

- You need to do a presentation in which you talk about an article, book, or report.

- You write a summary paper in which the entire paper is a summary of a specific work.

- You summarize a class discussion, lesson, or reading in the form of personal notes or a discussion board post.

- You do something like an annotated bibliography where you write short summaries of multiple works in preparation for a longer assignment.

- You write quick summaries within the body of another assignment. For example, in an argumentative essay, you will likely need to have short summaries of the sources you use to explain their argument before getting into how the source helps you prove your point.

Regardless of what type of summary you are doing, though, there are a few steps you should always follow:

- Skim the work you are summarizing before you read it. Notice what stands out to you.

- Next, read it in depth. Do the same things stand out?

- Put the full text away and write in a few sentences what the main idea or point was.

- Go back and compare to make sure you didn’t forget anything.

- Expand on this to write and then edit your summary.

Each type of academic summary requires slightly different things. Let’s get down to details.

How Do I Write a Summary Paper?

Sometimes teachers assign something called a summary paper. In this, the entire thing is a summary of one article, book, story, or report.

To understand how to write this paper, let’s talk a little bit about the purpose of such an assignment.

A summary paper is usually given to help a teacher see how well a student understands a reading assignment, but also to help the student digest the reading. Sometimes, it can be difficult to understand things we read right away.

However, a good way to process the information is to put it in our own words. That is the point of a summary paper.

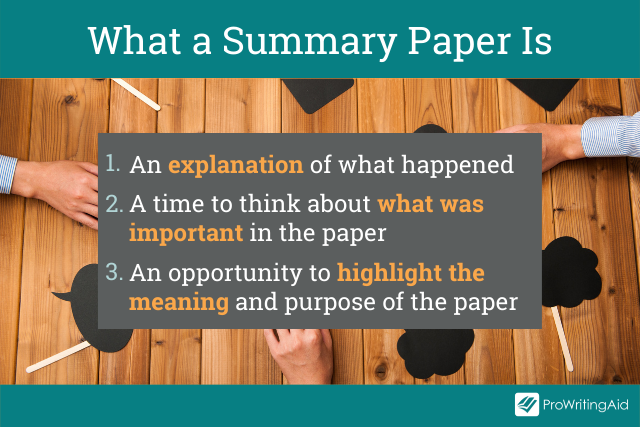

A summary paper is:

- A way to explain in our own words what happened in a paper, book, etc.

- A time to think about what was important in the paper, etc.

- A time to think about the meaning and purpose behind the paper, etc.

Here are some things that a summary paper is not:

- A review. Your thoughts and opinions on the thing you are summarizing don’t need to be here unless otherwise specified.

- A comparison. A comparison paper has a lot of summary in it, but it is different than a summary paper. In a summary paper, you are just saying what happened; you aren’t pointing out places where it could have been done differently.

- A paraphrase (though you might have a little paraphrasing in there). In the section on using summary in longer papers, I talk more about the difference between summaries, paraphrases, and quotes.

Because a summary paper is usually longer than other forms of summary, you will be able to choose to include more detail. However, it still needs to focus on the important events. Summary papers are usually shorter papers.

Let’s say you are writing a 3–4 page summary. You are likely summarizing a full book or an article or short story, which will be much longer than 3–4 pages.

Imagine that you are the author of the work, and your editor comes to you and says they love what you wrote, but they need it to be 3–4 pages instead.

How would you tell that story (argument, idea, etc.) in that length without losing the heart or intent behind it? That is what belongs in a summary paper.

How Do I Write Useful Academic Notes?

Sometimes, you need to write a summary for yourself in the form of notes or for your classmates in the form of a discussion post.

You might not think you need a specific approach for this. After all, only you are going to see it.

However, summarizing for yourself can sometimes be the most difficult type of summary. If you try to write down everything your teacher says, your hand will cramp and you’ll likely miss a lot.

Yet typing out a transcript doesn’t work either, because studies show that writing things down (not typing them) actually helps you remember them better.

So how do you summarize the lessons without leaving out important points?

There are some tips for this:

- If your professor writes it on the board, it is probably important.

- What points do your textbooks include when summarizing information? Use these as a guide.

- Write the highlight of every X amount of time, with X being the time you can go without missing anything or getting tired. This could be one point per minute, or three per five minutes, etc.

How Do I Create an Annotated Bibliography?

An annotated bibliography requires a very specific style of writing. Often, you will write these before a longer research paper. The assignment will ask you to find a certain number of articles and write a short annotation for each of them.

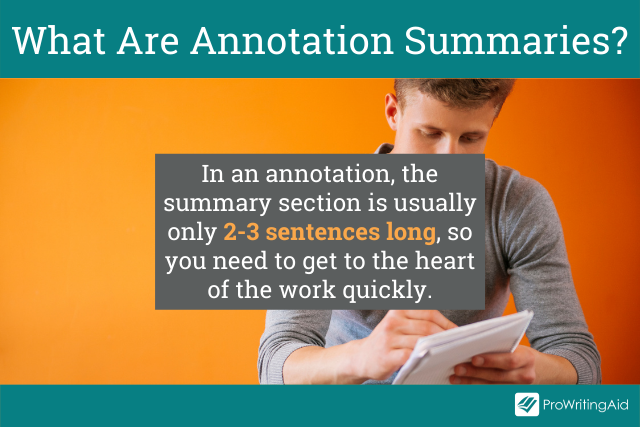

While an annotation is more than just a summary, it usually starts with a summary of the work. This will be about 2–3 sentences long. Because you don’t have a lot of room, you really have to think about what the most important thing the work says is.

This will basically ask you to explain the point of the article in these couple of sentences, so you should focus on the main point when expressing it.

Here is an example of a summary section within an annotation about this post:

“In this post, the author explains how to write a summary in different types of settings. She walks through academic, professional, and personal summaries. Ultimately, she claims that summaries should be short explanations that get the audience caught up on the topic without leaving out details that would change the meaning.”

Can I Write a Summary Within an Essay?

Perhaps the most common type of summary you will ever do is a short summary within a longer paper.

For example, if you have to write an argumentative essay, you will likely need to use sources to help support your argument.

However, there is a good chance that your readers won’t have read those same sources.

So, you need to give them enough detail to understand your topic without spending too much time explaining and not enough making your argument.

While this depends on exactly how you are using summary in your paper, often, a good amount of summary is the same amount you would put in an annotation.

Just a few sentences will allow the reader to get an idea of the work before moving on to specific parts of it that might help your argument.

What’s the Difference Between Summarizing, Paraphrasing, and Using Quotes?

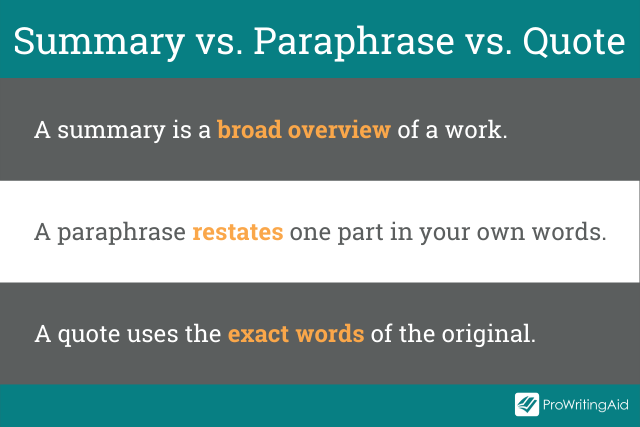

One important thing to recognize when using summaries in academic settings is that summaries are different than paraphrases or quotes.

A summary is broader and more general. A paraphrase, on the other hand, puts specific parts into your own words. A quote uses the exact words of the original. All of them, however, need to be cited.

Let’s look at an example:

Take these words by Thomas J. Watson:

“Would you like me to give you a formula for success? It’s quite simple, really. Double your rate of failure. You are thinking of failure as the enemy of success. But it isn’t at all. You can be discouraged by failure—or you can learn from it. So go ahead and make mistakes. Make all you can. Because, remember, that’s where you will find success.”

Let’s say I was told to write a summary, a paraphrase, and a quote about this statement. This is what it might look like:

Summary: Thomas J. Watson said that the key to success is actually to fail more often. (This is broad and doesn’t go into details about what he says, but it still gives him credit.)

Paraphrase: Thomas J. Watson, on asking if people would like his formula for success, said that the secret was to fail twice as much. He claimed that when you decide to learn from your mistakes instead of being disappointed by them, and when you start making a lot of them, you will actually find more success. (This includes most of the details, but it is in my own words, while still crediting the source.)

Quote: Thomas J. Watson said, “Would you like me to give you a formula for success? It’s quite simple, really. Double your rate of failure. You are thinking of failure as the enemy of success. But it isn’t at all. You can be discouraged by failure—or you can learn from it. So go ahead and make mistakes. Make all you can. Because, remember, that’s where you will find success.” (This is the exact words of the original with quotation marks and credit given.)

Avoiding Plagiarism

One of the hardest parts about summarizing someone else’s writing is avoiding plagiarism.

That’s why I have a few rules/tips for you when summarizing anything:

1. Always cite.

If you are talking about someone else’s work in any way, cite your source. If you are summarizing the entire work, all you probably need to do (depending on style guidelines) is say the author’s name. However, if you are summarizing a specific chapter or section, you should state that specifically. Finally, you should make sure to include it in your Works Cited or Reference page.

2. Change the wording.

Sometimes when people are summarizing or paraphrasing a work, they get too close to the original, and actually use the exact words. Unless you use quotation marks, this is plagiarism. However, a good way to avoid this is to hide the article while you are summarizing it. If you don’t have it in front of you, you are less likely to accidentally use the exact words. (However, after you are done, double check that you didn’t miss anything important or give wrong details.)

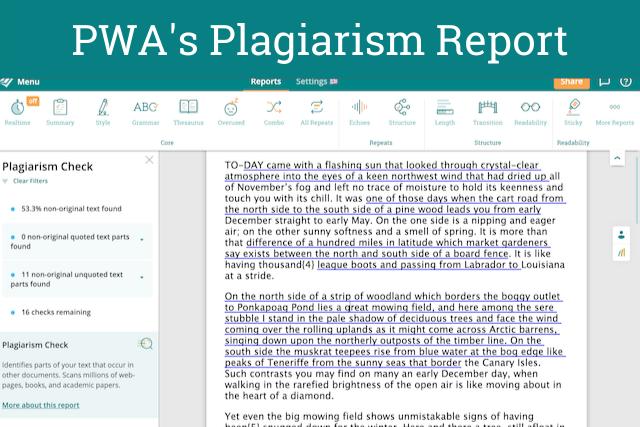

3. Use a plagiarism checker.

Of course, when you are writing any summary, especially academic summaries, it can be easy to cross the line into plagiarism. If this is a place where you struggle, then ProWritingAid can help.

Just use our Plagiarism Report. It’ll highlight any unoriginal text in your document so you can make sure you are citing everything correctly and summarizing in your own words.

Find out more about ProWritingAid plagiarism bundles.

Along with academic summaries, you might sometimes need to write professional summaries. Often, this means writing a summary about yourself that shows why you are qualified for a position or organization.

In this section, let’s talk about two types of professional summaries: a LinkedIn summary and a summary section within a resume.

How Do I Write My LinkedIn Bio?

LinkedIn is all about professional networking. It offers you a chance to share a brief glimpse of your professional qualifications in a paragraph or two.

This can then be sent to professional connections, or even found by them without you having to reach out. This can help you get a job or build your network.

Your summary is one of the first things a future employer might see about you, and how you write yours can make you stand out from the competition.

Here are some tips on writing a LinkedIn summary:

- Before you write it, think about what you want it to do. If you are looking for a job, what kind of job? What have you done in your past that would stand out to someone hiring for that position? That is what you will want to focus on in your summary.

- Be professional. Unlike many social media platforms, LinkedIn has a reputation for being more formal. Your summary should reflect that to some extent.

- Use keywords. Your summary is searchable, so using keywords that a recruiter might be searching for can help them find you.

- Focus on the start. LinkedIn shows the first 300 characters automatically, and then offers the viewer a chance to read more. Make that start so good that everyone wants to keep reading.

- Focus on accomplishments. Think of your life like a series of albums, and this is your speciality “Greatest Hits” album. What “songs” are you putting on it?
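To check how the opening of a draft reads on its own, you can cut it at the 300-character mark mentioned in the tips. This is a minimal sketch, assuming the 300-character figure from the tip holds; the names `PREVIEW_CHARS` and `preview` are hypothetical and are not part of any LinkedIn tool.

```python
PREVIEW_CHARS = 300  # figure taken from the tip above; the real cutoff may differ


def preview(summary: str, limit: int = PREVIEW_CHARS) -> str:
    """Return the part of the summary a viewer would see before expanding it."""
    return summary[:limit]


# Illustrative draft text only.
draft = "I help research teams turn dense reports into clear summaries. " * 10
print(len(draft), "characters in total")
print(preview(draft))
```

If the printed preview stops before your strongest point, consider moving that point closer to the start.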

How Do I Summarize My Experience on a Resume?

Writing a professional summary for a resume is different than any other type of summary that you may have to do.

Recruiters go through a lot of resumes every day. They don’t have time to spend ages reading yours, which means you have to wow them quickly.

To do that, you might include a section at the top of your resume that acts almost as an elevator pitch: That one thing you might say to a recruiter to get them to want to talk to you if you only had a 30-second elevator ride.

If you don’t have a lot of experience, though, you might want to skip this section entirely and focus on playing up the experience you do have.

Outside of academic and professional summaries, you use summary a lot in your day-to-day life.

Whether it is telling a good piece of trivia you just learned or a funny story that happened to you, or even setting the stage in creative writing, you summarize all the time.

How you use summary can be an important consideration in whether people want to read your work (or listen to you talk).

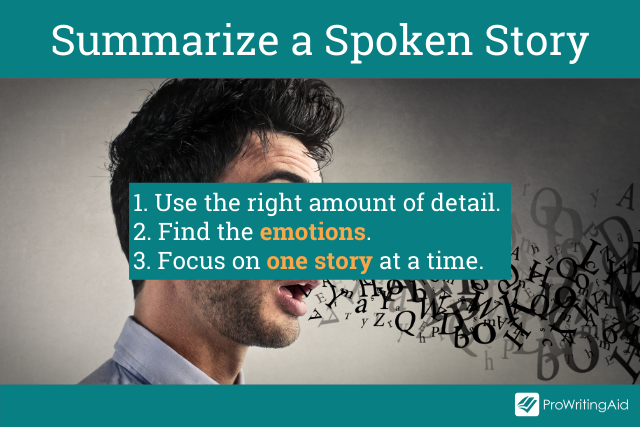

Here are some things to think about when telling a story:

- Pick interesting details. Too many and your point will be lost. Not enough, and you didn’t paint the scene or give them a complete idea about what happened.

- Play into the emotions. When telling a story, you want more information than the bare minimum. You want your reader to get the emotion of the story. That requires a little bit more work to accomplish.

- Focus. A summary of one story can lead to another can lead to another. Think about storytellers that you know that go off on a tangent. They never seem to finish one story without telling 100 others!

To wrap up (and to demonstrate everything I just talked about), let’s summarize this post into its most essential parts:

A summary is a great way to quickly give your audience the information they need to understand the topic you are discussing without having to know every detail.

How you write a summary is different depending on what type of summary you are doing:

- An academic summary usually gets to the heart of an article, book, or journal, and it should highlight the main points in your own words. How long it should be depends on the type of assignment it is.

- A professional summary highlights you and your professional, academic, and volunteer history. It shows people in your professional network who you are and why they should hire you, work with you, use your talents, etc.

Being able to tell a good story is another form of summary. You want to tell engaging anecdotes and facts without boring your listeners. This is a skill that is developed over time.

Take your writing to the next level:

20 Editing Tips from Professional Writers

Whether you are writing a novel, essay, article, or email, good writing is an essential part of communicating your ideas. This guide contains the 20 most important writing tips and techniques from a wide range of professional writers.

Be confident about grammar

Check every email, essay, or story for grammar mistakes. Fix them before you press send.

Ashley Shaw

Ashley Shaw is a former editor and marketer/current PhD student and teacher. When she isn't studying con artists for her dissertation, she's thinking of new ways to help college students better understand and love the writing process. You can follow her on Twitter, or, if you prefer animal accounts, follow her rabbits, Audrey Hopbun and Fredra StaHare, on Instagram.

How to Write a Summary of an Article: Brevity in Brilliance

Table of contents

- 1 What Is an Article Summary?

- 2 Difference Between Abstract and Research Summary Writing

- 3.1 Preparing for Summarizing

- 3.2 Identifying Main Ideas

- 3.3 Writing The Summary

- 4.1 Introduction

- 4.2 Methods

- 4.3 Results

- 4.4 Discussion

- 4.5.1 Structure Types

- 5 Summary Writing Tips and Best Practices

- 6 Common Mistakes to Avoid

- 7 Examples of Article Summaries

Writing a review or a critique is often more difficult than it seems, so students and writers alike are often wondering about how to summarize an article. We know how challenging a task this can be, so this guide will give you a clear perspective and the main points on how to write a summary of an article.

Here’s a brief overview of the main points the article will cover before we start:

- The essence of an article summary and how to approach writing it;

- Three main steps for a successful research summary;

- Tips and strategies for outlining the main idea;

- Examples of good and bad short summaries for inspiration;

- Common mistakes to avoid when writing a research article summary.

The steps outlined in this post will help you summarize an article in your own words without sacrificing the original text’s message and ideas.

What Is an Article Summary?

An article summary is a concise and condensed version of a longer piece of writing, often an article, research paper, or news report. Its purpose is to capture the main ideas, crucial points, and key arguments found in the original text, providing a brief and easily understandable overview.

These summaries are composed in the author’s own words, distilling the essential information to help readers quickly grasp the content without having to read the entire article. They serve as a helpful tool to offer a snapshot of the most important aspects of the content, making it simpler for readers to decide whether they wish to delve into the complete article.

A common goal of academic summary writing is to improve critical thinking skills, and summaries serve as great practice for academic writers to improve their own writing skills. There are several main goals of writing a synopsis of an article:

- It provides a comprehensive yet brief descriptive comment on a particular article, telling your readers about the author’s topic sentence and the key points of the work.

- It outlines the reader’s perspective on the paper concisely while keeping the main point.

- It identifies the crucial segments from each of the paper’s sections.

A proper article summary can help you write your college essays the right way because it provides a great, concise view of the source article. If you often face writing tasks like academic papers, knowing how to write a good synopsis can upgrade your writing skills.

Difference Between Abstract and Research Summary Writing

Things get confusing when you try to define the place and purpose of each inside the text. To be more precise, the abstract appears first in the academic article, whereas the summary appears last.

Many students cannot distinguish between a summary and an abstract of a research paper. While these have certain similarities, they are not the same. Therefore, you must be aware of the subtleties before beginning a research article.

On the one hand, both components have a limited scope. Their goal is to provide a thorough literature assessment of the research paper’s main ideas. When you write a research summary, focus on your topic, methods, and findings.

Below you can find more differences between the abstract and research article summary for your project:

- Abstracts provide a succinct synopsis of your work and showcase your writing style.

- Abstracts lay out the background information and clarify the primary hypothesis or thesis statement, while the summary emphasizes your research methodology, highlighting the important elements.

Finally, you must submit the abstract before actual publication. Article summaries, on the other hand, come with the finished paper.

Steps to Write a Summary for an Article

In the world of effective communication, the skill of crafting short yet informative summaries is invaluable. Whether you’re a student dealing with academic articles, a professional simplifying complex reports, or simply someone looking to grasp the essence of an interesting read, mastering the art of summarization is crucial. These summarizing guidelines will lead you through the steps to write a compelling piece.

These steps will empower you to extract core ideas and key takeaways, making it easier to understand and share information efficiently.

Preparing for Summarizing

Before you start writing your summary of the article, you’ll have to read the piece a few times first as a base for further understanding. It’s recommended that you read the paper without taking any notes first because this gives you some room to create your own perspective of the work.

After the first reading, you should be able to tell the author’s perspective and the type of audience they are focusing on. Subsequently, you should get ready for the second read with a paper to write notes on as you get into the arguments of the post.

Identifying Main Ideas

As you come to the second read of the article, you should focus on the thesis statement, main ideas, and important details laid out in the piece. If you look at the headings and sections individually, you should be able to get some material for the summarizing by taking out the crucial events or a topic sentence from each part.

While writing down the main arguments of the post, make sure to ask the five “W” questions. If you think about the “Who”, “Why”, “When”, “Where”, and “What”, you should be able to construct a layout for the summary based on the main ideas.

Writing The Summary

Once you lay down the article’s main ideas and answer the key questions about it, you’ll have an outline for writing. The next move is to keep an eye on the structure of the summary and use the material in your notes to write your short take on these essential points.

The steps for writing article summaries can be similar to the main steps of article review writing. Therefore, it’s necessary to discuss the structure next so we can set you in the right direction with summary-specific format tips.

Outline Your Research Summary

To summarize research papers, you must be aware of the basic structure. You may know how to cite sources and filter the ideas, but you’ll also have to organize your findings in a concise academic structure.

The following components are essential for a summary paper format:

Introduction

Your research article’s introduction is a brief overview of your work. It outlines important ideas or presents the state of the topic under research to make the issue easier for your audience to comprehend.

Methods

The Methods section covers the tests, databases, experiments, surveys, questionnaires, sampling, or statistical analysis used to conduct a research study. However, for a solid research paper summary, you should avoid getting bogged down in the specifics and just discuss the tools you used and how you conducted your study.

Results

This part of the research summary presents all of the data you gathered from your investigations and analysis. Therefore, incorporate any information you learned by observing your subject, along with the supporting theories.

Discussion

This stage requires you to evaluate the results in light of the pertinent background and determine how they relate to the prevailing trends. You need to identify the subject’s advantages and disadvantages once you have provided an explanation using theoretical models. You may also recommend more research in the area.

Conclusion

Use this last part to support or refute your theories in light of the data collection and analysis, if your mentor insists on it being in a separate paragraph.

Here’s a research summary example outlining the topic “The Impact of Social Media on Mental Health Among Adolescents”:

I. Introduction.

- Brief overview of the rise of social media.

- Importance of studying its impact on mental health.

- Statement of the problem.

- Purpose of the study.

II. Literature Review.

- Statistics on social media penetration.

- Common platforms and their features.

- Studies supporting a negative and/or a positive impact.

- Gaps and inconsistencies in existing literature.

III. Methodology.

- Quantitative approach.

- Cross-sectional survey.

- Survey instrument details.

- Ethical considerations.

IV. Data Analysis.

- Descriptive statistics.

- Inferential statistics (e.g., regression analysis).

- Tables and figures.

- Key findings.

V. Discussion.

- Correlation between social media usage and mental health.

- Identification of patterns and trends.

- Practical implications for parents, educators, and policymakers.

- Suggestions for future research.

VI. Conclusion.

- Summary of key findings.

- Final remarks on the study’s contribution to the field.

The given research article summary example depicts how the text can be structured in a laconic and effective way.

Structure Types

So, now you can see the best practices and structure types for writing both empirical and argumentative summaries. The only thing left to discuss is to go through our example outlined above and divide its structure into distinctive parts, which you could use when writing your own summary.

The best way to start is by mentioning the title and the author of the article. It’s best to keep it straightforward: “In “Who Will Be In Cyberspace”, author Langdon Winner takes a philosophical approach…”

The next part is critical for writing a good summary since you’ll want to captivate the reader with a short and concise one-point thesis. If you look at our example, you’ll see that the first sentence or two contains the main point, along with the title and the author’s name.

So, that’s an easy way to get straight to the point while also sounding professional, and this works for all the essay structure types. You should briefly point out the main supportive points as well: “He supports this through the claims that people working in the information industry should be more careful about newly developed technologies…”

The key is to keep it neutral and not overcomplicate things with supportive claims. Try to make them as precise as possible and provide examples that directly support the main thesis.

Unless it’s a scientific article summary where you are requested to provide your take as a researcher, it’s also best to avoid using personal opinions. You can conclude the summary by once again mentioning the main thought of the article, and this time you can make the connection between the main thesis and supporting points to wrap up.

Summary Writing Tips and Best Practices

The way in which you’ll approach writing a summary depends on the type and topic of the original article, but there are some common points to keep in mind. Whether you are trying to summarize a research article or a journal piece, these tips can help you stay on topic:

- Be concise – The best way to summarize an article quickly is to be straightforward. In practice, it means making it all in a few sentences and no longer than one-fourth of the size of the original article.

- Highlight the study’s most significant findings – For your summary paper, prioritize presenting results that have the most substantial impact or contribute significantly to the field.

- Create a reverse outline – Remove the supporting writing from the article to end up with a reverse outline; the ideas that remain are the ones you can expand on in your summary.

- Use your own words – In most cases, a paper summary will be scanned for plagiarism, so you need to make sure you are using your words to express the main point uniquely. This doesn’t mean you have to provide your perspective on the topic. It just means your summary needs to be original.

- Make sure to follow the tone – Summarizing an article means you’ll also need to reflect on the tone of the original piece. To properly summarize an article, you should address the same tone in which the author is addressing the audience.

- Use author tags – Along with the thesis statement, you also have to express the author’s take through author tags. This means you need to state the name of the author and piece title at the beginning, and keep adding these “tags” like “he” or “she” or simply refer to the author by name when expressing their ideas.

- Avoid minor details – To ensure you stay on topic, it’s recommended that you avoid repetition, any minor details, or descriptive elements. Try to keep the focus on key points, main statements and ideas without being carried away in thought.

- Steer clear of interpretations or personal opinions – Avoid personal interpretations or opinions when you write a summary for a research paper. Remember to stick to presenting facts and findings without injecting subjective views.

- Highlight the research context – Focus on explaining to the readers why the research is important. Your research paper summary must not simply repeat previous studies; identify the gap in the existing literature that the research could fill. When you write a summary of a research article, try to help readers understand the significance of the study within the broader academic or practical context. Use a paraphraser if you need a fresh perspective on your writing style.
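
The “one-fourth” guideline from the first tip above is easy to check mechanically. The following is a minimal sketch, assuming length is measured in whitespace-separated words; the names `word_count`, `within_length_budget`, and `max_ratio` are illustrative and do not come from any tool mentioned in this guide.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words in a text."""
    return len(text.split())


def within_length_budget(original: str, summary: str, max_ratio: float = 0.25) -> bool:
    """Return True if the summary is at most max_ratio of the original's word count."""
    original_words = word_count(original)
    if original_words == 0:
        raise ValueError("original text is empty")
    return word_count(summary) / original_words <= max_ratio


if __name__ == "__main__":
    article = "word " * 400   # stand-in for a 400-word article
    draft = "word " * 90      # stand-in for a 90-word summary
    # 90 / 400 = 0.225, which is under the 0.25 budget
    print(within_length_budget(article, draft))  # True
```

A word-count ratio is only a rough proxy: a summary can meet the budget and still miss the main point, so the check complements the other tips rather than replacing them.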

Common Mistakes to Avoid

Just like it’s important to avoid plagiarism in your text, there are a few other mistakes that commonly occur. The whole point is to summarize articles faithfully, with a focus on the author’s argument and writing in your own words.

We’ve often seen college graduates do an article summary and misrepresent the author’s idea or take, so that’s an important piece of advice. You should avoid drifting away from the author’s main idea throughout the summary and keep it precise but not too short.

Quotes shouldn’t be used directly within the piece, and by that, we mean both quotes from the author and quotes from other summaries on the same topic since it would qualify as plagiarism. Finally, you shouldn’t state your opinion unless you are doing a summary of a novel or short story with a specific academic goal of writing from your perspective.

Examples of Article Summaries

While our guide and tips can be used for a variety of different types of written pieces, there are various types of articles. From professional essay writing to informative article synopsis, options can vary.

We will give you an example of a summary for each of the different article types that you may come across, so you can see exactly what we mean by those standardized instructions and tips:

The question of how to summarize an article isn’t new to students or even writers with more experience, so we hope this guide will shed some light on the process. The most important piece of advice we can give you is to stay true to the main statement and key points of the article and express the synopsis in your original way to avoid plagiarism.

As for the structure, we are certain you’ll be able to use our examples and layouts for different types of summaries, so make sure to pay extra attention to the structure, quotes, and author tags.

What is a good way to start a summary?

To begin a summary effectively, start by briefly introducing the article’s topic and the main points the author discusses. Capture the reader’s attention with a concise yet engaging opening sentence. Provide context and mention the author’s name and the article’s title. Convey the essence of the article’s content, highlighting its significance or relevance to the reader. This initial context-setting sentence lays the foundation for a clear and engaging summary that draws the reader in.

What is the difference between summarizing and criticizing an article?

Summarizing an article entails condensing its main points objectively and neutrally, presenting the essential information to readers. In contrast, critiquing an article involves a more in-depth evaluation, assessing its strengths and weaknesses, methodology, and overall quality, often including the expression of personal opinions and judgments. Summarization offers a snapshot of the content, while critique delves deeper, offering a comprehensive assessment.

When summarizing a text, focus on these critical questions:

- “What’s the main point?” Find the core message or argument.

- “What supports the main point?” Identify key supporting details and evidence.

- “Who’s the author?” Consider their qualifications and potential bias.

- “Who’s the intended audience?” Understand the expected reader’s knowledge level.

- “Why is it important?” Explain the text’s relevance and significance within its context. Addressing these questions ensures a thorough and effective summary.

How long is a summary and how many paragraphs does a summary have?

A summary typically ranges from one to three paragraphs in length, depending on the complexity and length of the original text. The goal is to concisely present the main points or essence of the source material, usually resulting in a summary that is significantly shorter than the original.


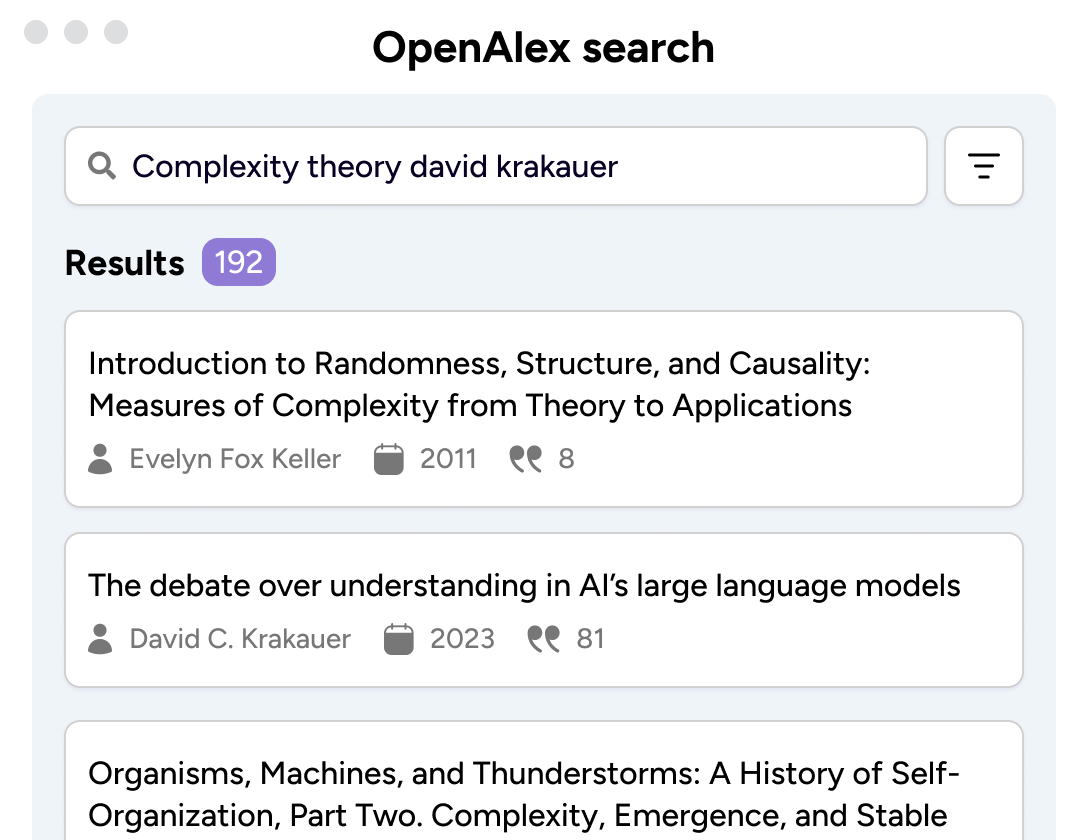

Best Summary Generator | Tools Tested & Reviewed

Published on May 6, 2024 by Jack Caulfield. Revised on May 21, 2024.

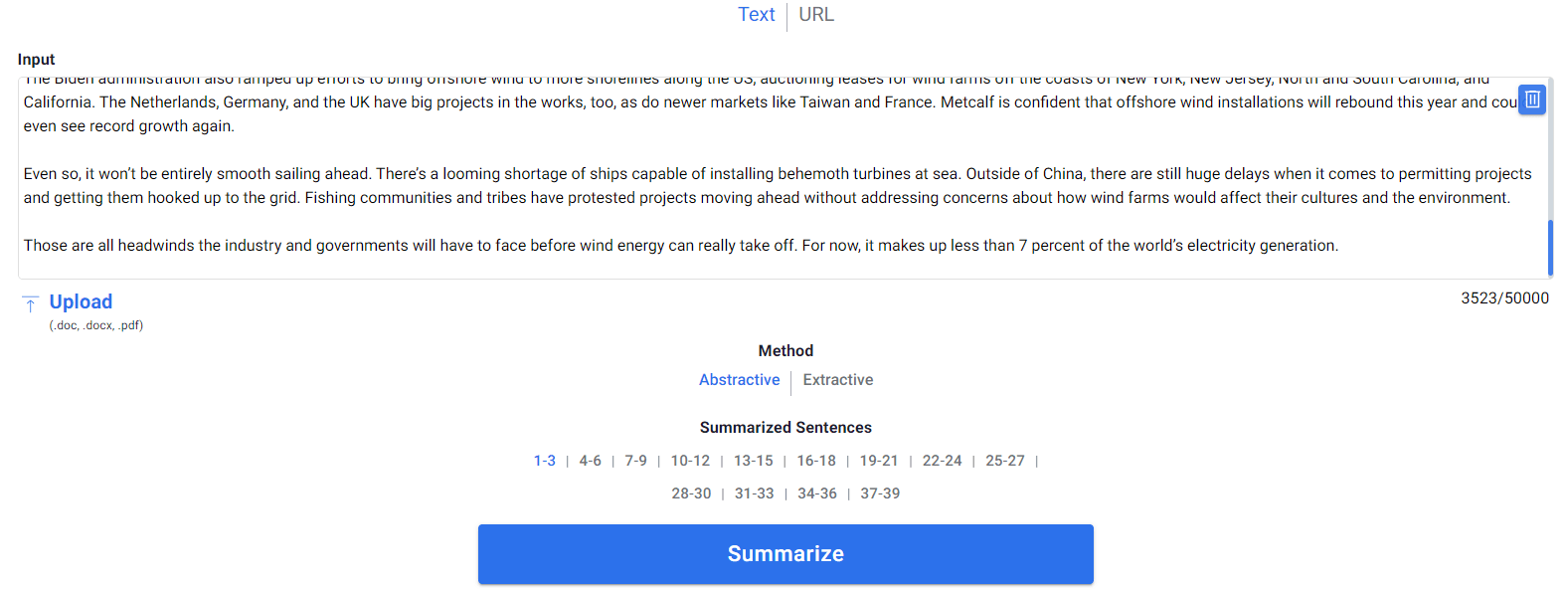

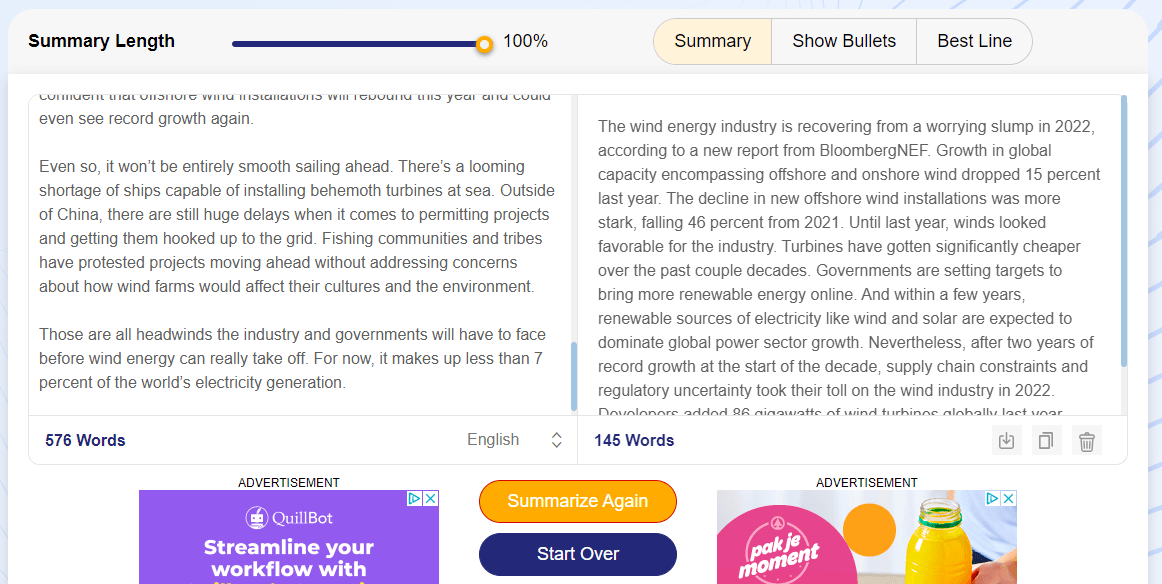

A summary generator (also called a summarizer, summarizing tool, or text summarizer) is a kind of AI writing tool that automatically generates a short summary of a text. Many tools like this are available online, but what are the best options out there?

To find out, we tested 11 popular summary generators (all available free online, some with a premium version). We used two texts: a short news article and a longer academic journal article. We evaluated tools based on the clarity, accuracy, and concision of the summaries produced.

Our research indicates that the best summarizer available right now is the one offered by QuillBot. You can use it for free to summarize texts of up to 1,200 words, or up to 6,000 with a premium subscription.

| Tool | Version tested | Premium price (monthly) |
|---|---|---|
| QuillBot | Premium | $19.95 |
| Resoomer | Premium | $10.57 |
| Scribbr | Free | — |
| Sassbook | Premium | $39 |
| Paraphraser | Free | — |
| TLDR This | Premium | $4.99 |
| Rephrase | Free | — |
| Editpad | Premium | $30 |
| Summarizing Tool | Free | — |
| Smodin | Premium | $5 |
| Summarizer | Free | — |


Table of contents

1. QuillBot
2. Resoomer
3. Scribbr
4. Sassbook
5. Paraphraser
6. TLDR This
7. Rephrase
8. Editpad
9. Summarizing Tool
10. Smodin
11. Summarizer

- Research methodology
- Frequently asked questions about summarizers

1. QuillBot

- Produces the clearest and most accurate summaries

- Summarizes text in a creative way, combining sentences

- Can summarize long texts (up to 6,000 words with premium)

- Options for length, format of summary, and keywords to focus on

- Highlights text that was used in the summary

- Summaries occasionally include errors

- Premium costs $19.95 a month (but gets you a variety of other tools)

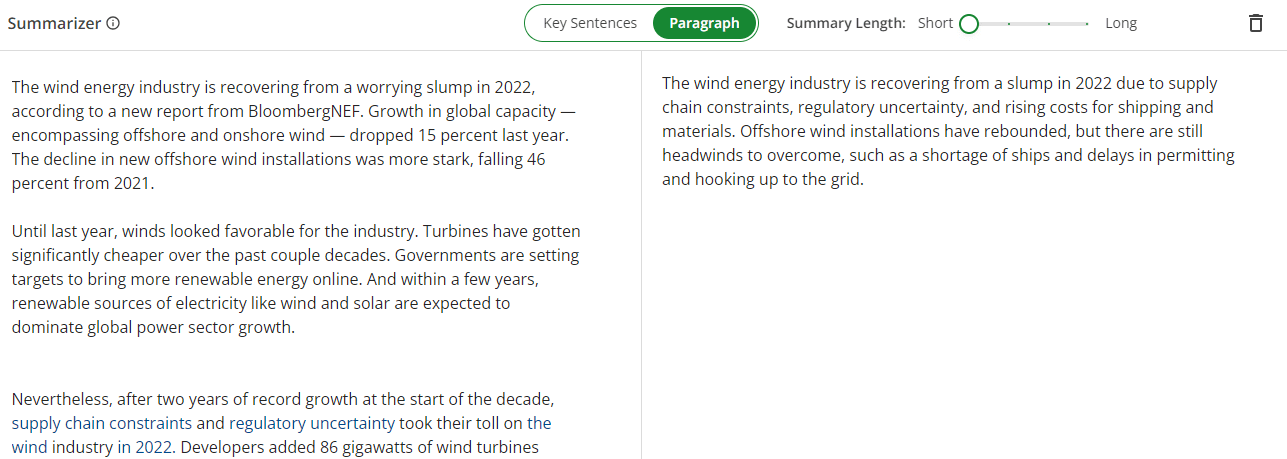

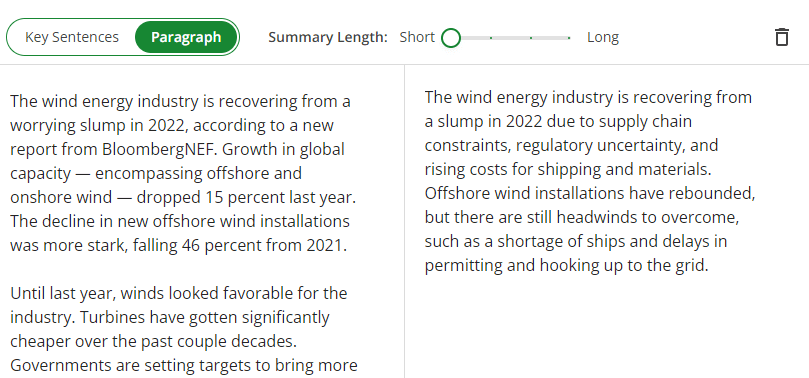

We found QuillBot’s summarizer to be the most effective tool available right now. Its technology is more advanced and creative than any other tool’s. It offers a Key Sentences mode and a Paragraph mode; we found the Paragraph mode to be the most useful.

This mode effectively combined information from multiple sentences to produce a concise and clear summary. In the premium version, it was also able to summarize the longer testing text very effectively. The tool usefully highlights text from your input that was used in the summary, and it allows you to pick keywords to focus on if you want a summary of a specific theme.

We did notice some errors even in this tool: it occasionally misunderstood the meaning of the text or combined sentences in a way that was misleading. On one occasion, it seemed to introduce a typo (“collectiveists”) that wasn’t present in the original text.

Try QuillBot’s summarizer

2. Resoomer


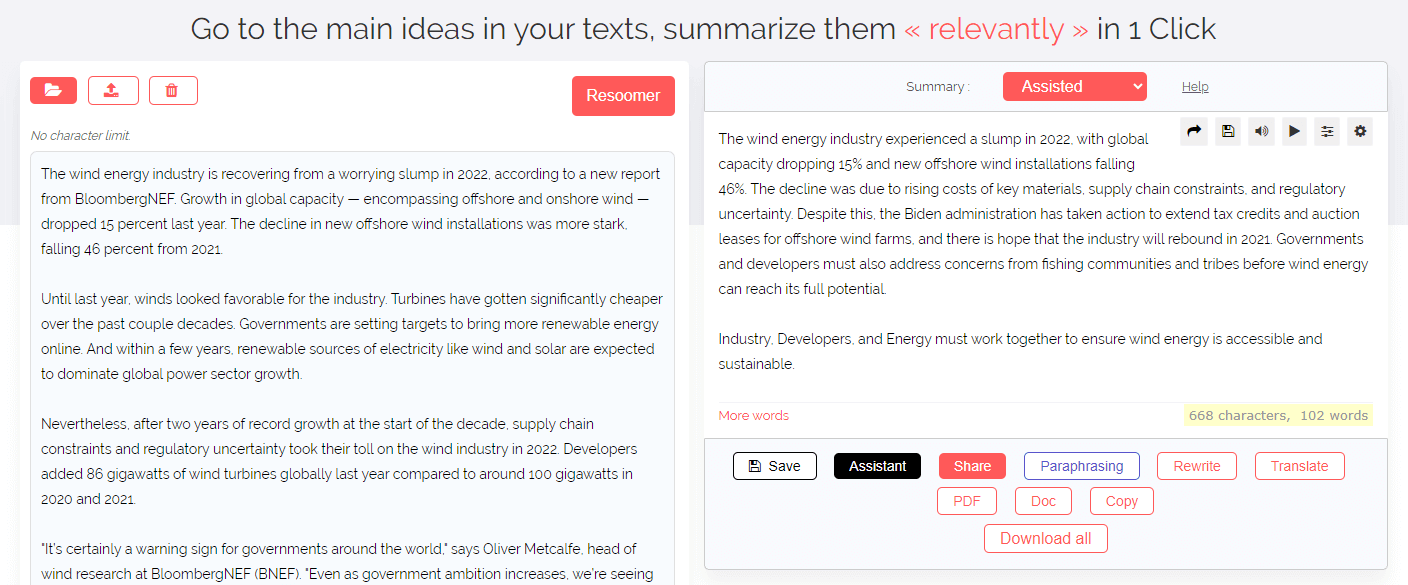

- Relatively clear and accurate summaries

- Summarizes creatively, combining sentences

- A variety of modes and options

- Can summarize long texts (no word limit as far as we could tell)

- Confusing interface with irrelevant features

- Summaries of long texts are long-winded and split across multiple pages

- Only the premium mode ($10.57 a month) is useful

We found that Resoomer, though significantly less powerful than QuillBot, was stronger than other competitors—at least, if you pay for its premium mode. Like QuillBot, it generated creative summaries that combined information from different sentences in a relatively fluent way.

It was able to summarize the long text, but the summary it produced was overly long and spread across multiple pages we had to click between, limiting its usefulness. Resoomer offers a variety of modes, but they are presented in a rather confusing way and all of the free modes are very basic, just picking out sentences from the text rather than generating an original summary.

The mode we found useful was the “Assisted” mode, which is unfortunately only available with a premium subscription. We also didn’t find a use for the unusual “More words” button, which generates a continuation of the summary, seemingly not based on anything in the text.

Try Resoomer

3. Scribbr

- Produces clear and accurate summaries (powered by QuillBot)

- Can’t summarize long texts (limit of 600 words)

Scribbr’s summarizer is powered by QuillBot technology, which means that it offers the same modes, options, and quality-of-life features such as highlighting text used in the summary. And it produces similarly creative summaries: clear, concise, and fluently written.

The Scribbr summarizer does have one key limitation compared to the QuillBot tool: it cannot handle longer texts, since it has a limit of 600 words per input. The Scribbr tool is free, with no sign-up required and no premium version available right now.

Try Scribbr’s summarizer

4. Sassbook

- Relatively fluent and creative summaries

- Can summarize long texts (up to 22,000 characters with premium)

- Provides options for length and format of summary

- Very expensive subscription ($39 a month)

- Adds unnecessary verbiage (“Authors say that …”)

- Fairly cluttered interface

- Summaries sometimes misleading or hard to follow

We found that Sassbook provided relatively creative summaries, combining information from different sentences in a similar way to QuillBot or Resoomer. But we found the results less clear than in those tools.

Especially for the longer text, we saw that Sassbook summaries were not very coherently structured, presenting information in a somewhat random order that was hard to follow. We also noticed the tool’s tendency to insert unnecessary text such as “Authors say that …”

Moreover, the tool can only handle longer texts if you pay for a premium subscription, and we found the premium subscription to be unreasonably priced at $39 a month. Perhaps if you find the other tools included in the subscription useful, it could be worth the price. For the summarizer alone, it certainly isn’t worth it, and there are much better and cheaper options out there.

Try Sassbook’s summarizer

5. Paraphraser


- Creative summaries with use of note-style language

- Summaries are usually relatively clear and accurate

- Can’t summarize long texts (no word limit stated, but didn’t work with our long text)

- Summaries are too long, and length controls make little difference

- Note-style summaries may not be what you want

- Some confusing moments in summaries

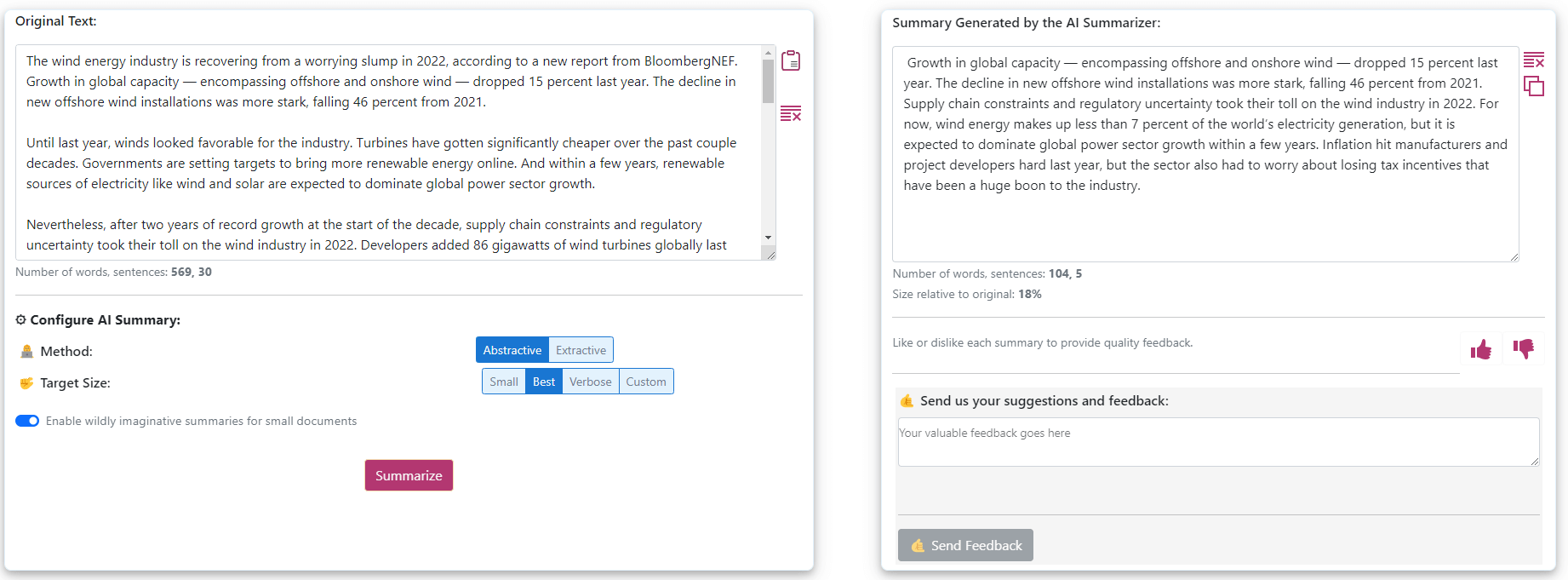

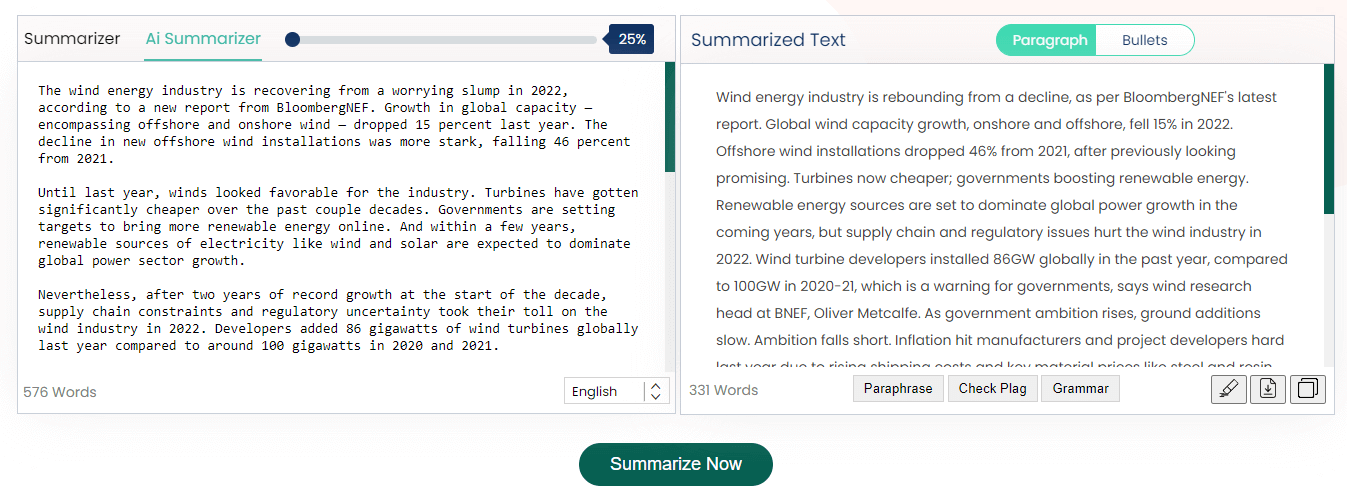

Paraphraser’s summarizer is a free tool with no premium options. It offers two modes, “Summarizer” and “AI Summarizer”; the difference isn’t clearly explained, but in our experience, AI Summarizer produced much better results. Summaries produced in this mode were creative but rough, using note-style language (e.g., omitting articles, using abbreviations) in a way we didn’t see in other tools.

Other options were available but made little difference to the output. Selecting different lengths of summary made very little difference in practice, and the alternative “Bullets” mode presented the same text as the “Paragraph” mode, but in bullet points. Because summary length couldn’t be effectively adjusted, summaries were always longer than we would have liked: over half the length of the full text.

There were also some confusing errors in the output: summaries would often end with a sentence like “Please shorten this text” that clearly shouldn’t be there. And although no word limit is mentioned, the tool didn’t work for our longer text in practice, summarizing only the first 1,000 words.

Try Paraphraser’s summarizer

6. TLDR This

- 10 free “AI” summaries to start with

- Can summarize the long text

- Just selects a few sentences from the text, producing no original summary

- Costs $4 a month for premium options (100 summaries a month)

- Premium options not noticeably better than free version

- Options (e.g., “short” or “detailed” modes) not noticeably different

The TLDR This tool seems to operate in a very basic way, just taking a few sentences from the text and presenting them in the order in which they originally appeared. It does not combine or paraphrase information in a creative way, even in its premium “AI” mode, which we found produced results nearly identical to those of the free “key sentences” mode.

Like some other tools, it can pick out keywords from the text. But the keywords selected are sometimes not very logical (e.g., “Percent Last Year”), and clicking on them just googles them rather than doing anything in the tool itself. We also did not notice any significant differences between the “short” and “detailed” modes.

Because of its very basic approach and the lack of noticeable differences between its modes, we don’t advise paying for TLDR This.
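
To make the “key sentences” behavior described above concrete, here is a minimal sketch of a purely extractive summarizer: it scores each sentence by the average frequency of its words, keeps the top few, and returns them in their original order. This is an illustration under stated assumptions, not the implementation of TLDR This or any other tool reviewed here; the stopword list, the scoring rule, and the names `split_sentences` and `extractive_summary` are all hypothetical.

```python
import re
from collections import Counter

# Small illustrative stopword list (an assumption, not a standard resource).
STOPWORDS = {
    "the", "a", "an", "and", "or", "of", "to", "in", "is", "it",
    "that", "this", "for", "on", "with", "as", "are", "was", "by",
}


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def content_words(text):
    """Lowercased words with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def extractive_summary(text, num_sentences=2):
    """Return the highest-scoring sentences, unchanged and in original order."""
    sentences = split_sentences(text)
    freq = Counter(content_words(text))

    def score(sentence):
        tokens = content_words(sentence)
        return sum(freq[w] for w in tokens) / len(tokens) if tokens else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    chosen = sorted(ranked[:num_sentences])  # restore original order
    return " ".join(sentences[i] for i in chosen)


if __name__ == "__main__":
    sample = (
        "Summarizers condense long texts. Some summarizers only copy sentences. "
        "The weather was pleasant yesterday. "
        "Good summarizers combine sentences into new text."
    )
    print(extractive_summary(sample, num_sentences=2))
```

Because every output sentence is copied verbatim, a tool built this way cannot combine or paraphrase information, which is the limitation the review points out.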

Try TLDR This

7. Rephrase

- Can’t summarize the long text (no word limit stated, but didn’t work in practice)

- No options for different lengths or formats of summary

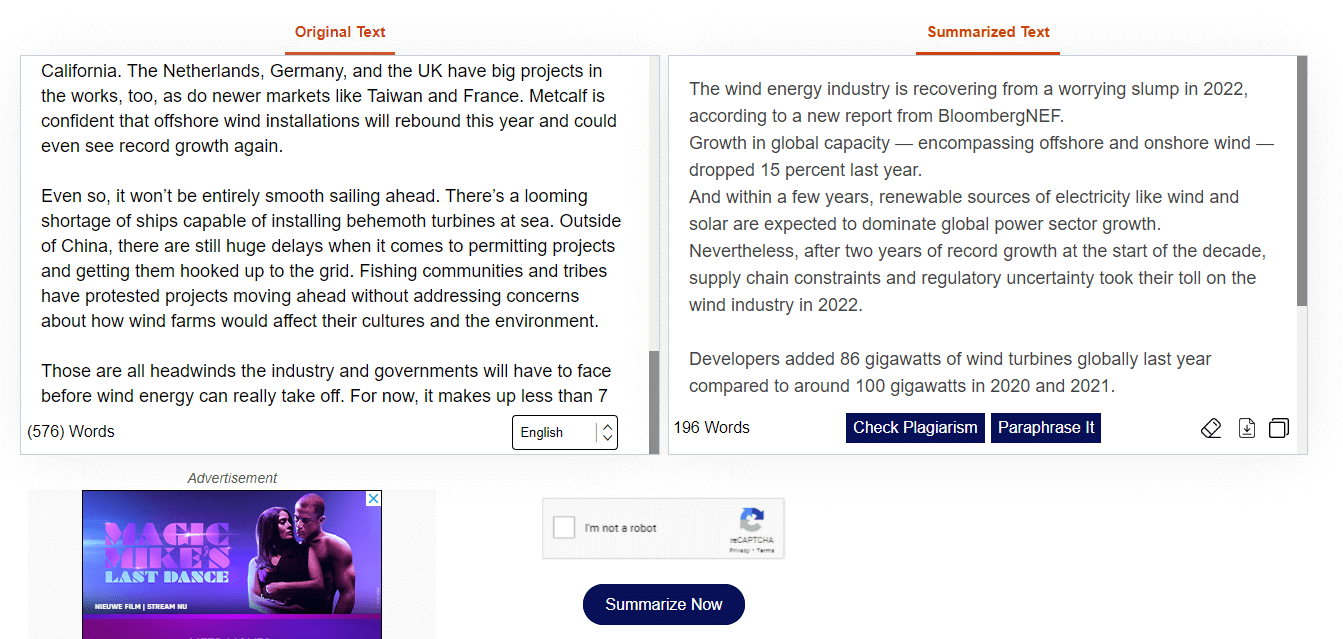

Like TLDR This, Rephrase’s summarizer seemed to just select sentences from the text and present them in the same order again, without any creative recombination of information. In this case, the only way it modified the text was by putting the paragraph breaks at different points.

Rephrase’s tool is free, but, as mentioned, it’s extremely basic. It also lacks any options to change the length or format of the summary. As with other tools like this, the sentences it selects feel very random and often make no sense out of context, meaning the “summary” provided is effectively useless.

No word limit is indicated in the tool, but in practice we found that it could only summarize the first 1,500 words of our longer text. We also found the interface somewhat cluttered with ads.

Try Rephrase’s summarizer

8. Editpad

- Can summarize the long text (stated limit of 10,000 words, seems to be 9,000 in reality and much lower in premium mode)

- Premium mode ($30 for a month) is indistinguishable from free mode apart from having a lower word limit and fewer options

- Messy interface

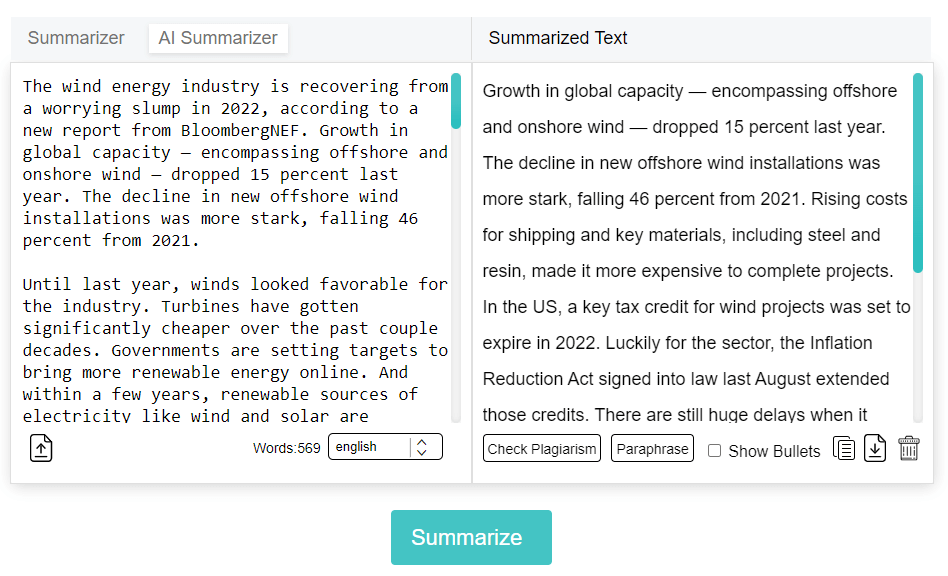

Editpad was one of the worst tools we tested: like Paraphraser’s tool, it offered a “Summarizer” mode (which seems identical to Paraphraser’s tool) and an “AI Summarizer” mode (only available with a $30 premium subscription in the case of Editpad).

But Editpad’s AI Summarizer mode seems worse than the free mode. The results in both modes are very basic, seemingly just selecting some sentences from the text and presenting them in the same order. The AI Summarizer mode differed in only two ways that we noticed: it could not summarize the long text (the free mode could), and it did not have any options regarding the length of the summary.

It’s not clear why Editpad charges money for a tool that seems to be much worse than the (already poor) tool they offer for free, but we strongly advise against paying for it.

Try Editpad’s summarizer

9. Summarizing Tool

- Just selects sentences from the text and presents them in random order

- Summary of long text is very long

Summarizing Tool is a free tool that doesn’t really produce coherent summaries. Like many other tools, it just chooses some sentences from the text rather than generating an original summary. Even worse, though, it shuffles the sentences into a random order, making the text difficult to follow.

It’s not clear how this kind of “summary” could be useful, since it’s much harder to understand than if the sentences were presented in their original order.

Additionally, while the tool could summarize the long text, its summary in this case was also very long. What you get is essentially an incoherent jumble of ideas that is not even particularly short.

Try Summarizing Tool

10. Smodin

- Can choose length of summary

- “Abstractive” and “extractive” modes not noticeably different

- Summary loads slowly

- $5 a month for premium version

Smodin’s summarizer seems to do effectively the same thing as Summarizing Tool: picking sentences from the text and presenting them out of order. In “abstractive” mode, we did notice it sometimes made slight changes to sentences, such as removing a word from the start, but it didn’t seem to properly combine information from different sentences.

One thing it did frequently do was to insert spelling errors (e.g., “religioosity”) and inappropriate synonyms (e.g., “humanity” instead of “personality”) into the text, which seems strange considering how little it otherwise changed in each sentence. In combination with the random order of the sentences, this results in a highly incoherent “summary.”

Paying $5 a month for the premium version raises the character limit from 30,000 to 50,000 and removes the daily limit of 30 entries. Given the poor quality of the tool, we don’t recommend paying.

Try Smodin’s summarizer

11. Summarizer

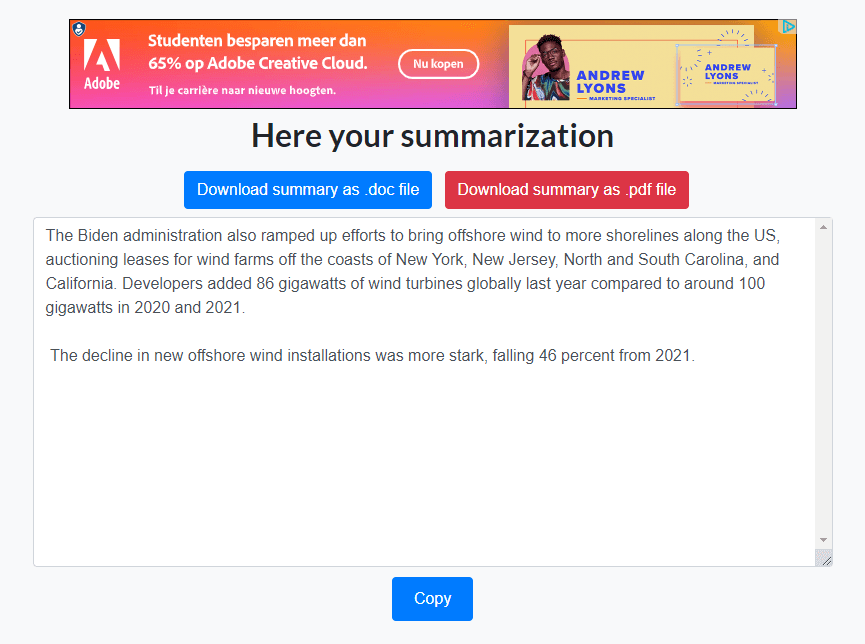

- Just gives you the same text back, but cut off halfway through—not a summary at all

- Unclear interface (100% length is actually the shortest)

- Not clear what the “Best Line” mode is for

Summarizer’s tool performed the worst out of those we tested. All it does is present the same text back to you, but cut off at a certain point (depending on the length of summary you select). No changes are made to any of the sentences.

Essentially, it’s a tool that deletes all but the first paragraph of a text for you. This is quite easy to do yourself with the backspace key, and it’s not likely to result in a “summary” of the text.

Try Summarizer

Research methodology

For our comparison, we selected 11 summarizing tools that show up prominently in search results. All the tools we tested can be used for free, but several of them have premium versions that you can use if you pay for a subscription. We tested the premium versions when available.

To compare the capabilities of the different tools, we used two testing texts, which are linked below:

- A short online news article (around 575 words)

- A longer academic journal article (around 3,500 words)

In each case, we pasted the entire main text of the article into the summarizer, leaving out things like footnotes, the article title, and details about the authors.

To judge the usefulness of the summaries generated, we looked at three qualitative factors:

- Concision: Did the tool effectively condense the text into a quick summary?

- Clarity: Is the summary easy to understand, or are sentences sometimes confusingly phrased or out of context?

- Accuracy: Does it correctly express the key points of the text? Are any important details left out or stated incorrectly?
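
Alongside these qualitative factors, the reviews repeatedly distinguish tools that merely copy sentences from tools that write new ones. One rough way to quantify that distinction is the share of summary sentences that appear verbatim in the source. The sketch below is illustrative only and is not the method used in these tests; the names `split_sentences` and `copied_fraction` and the naive sentence splitter are assumptions.

```python
import re


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def copied_fraction(source, summary):
    """Fraction of summary sentences that appear word for word in the source."""
    source_sentences = set(split_sentences(source))
    summary_sentences = split_sentences(summary)
    if not summary_sentences:
        return 0.0
    copied = sum(1 for s in summary_sentences if s in source_sentences)
    return copied / len(summary_sentences)


if __name__ == "__main__":
    source = "Cats sleep a lot. Dogs bark loudly. Birds sing at dawn."
    summary = "Cats sleep a lot. Dogs are noisy."
    # One of the two summary sentences is copied verbatim.
    print(copied_fraction(source, summary))  # 0.5
```

A value near 1.0 suggests a purely extractive tool, while a lower value suggests the tool rewrote or combined sentences. Exact matching misses light edits such as a removed word, so the number is best read as a rough signal.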

In the individual reviews, we also take into account details like user-friendliness, pricing, and limitations such as being unable to summarize the longer text.

Frequently asked questions about summarizers

Our research into the best summary generators (aka summarizers or summarizing tools) found that the best summarizer available is the one offered by QuillBot.

While many summarizers just pick out some sentences from the text, QuillBot generates original summaries that are creative, clear, accurate, and concise. It can summarize texts of up to 1,200 words for free, or up to 6,000 with a premium subscription.

Try the QuillBot summarizer for free

A summary is a short overview of the main points of an article or other source, written entirely in your own words.

An abstract concisely explains all the key points of an academic text such as a thesis, dissertation or journal article. It should summarize the whole text, not just introduce it.

An abstract is a type of summary, but summaries are also written elsewhere in academic writing. For example, you might summarize a source in a paper, in a literature review, or as a standalone assignment.

Cite this Scribbr article


Caulfield, J. (2024, May 21). Best Summary Generator | Tools Tested & Reviewed. Scribbr. Retrieved September 9, 2024, from https://www.scribbr.com/ai-tools/best-summarizer/


Jack Caulfield


Summary: Using it Wisely

What this handout is about.

Knowing how to summarize something you have read, seen, or heard is a valuable skill, one you have probably used in many writing assignments. It is important, though, to recognize when you must go beyond describing, explaining, and restating texts and offer a more complex analysis. This handout will help you distinguish between summary and analysis and avoid inappropriate summary in your academic writing.

Is summary a bad thing?

Not necessarily. But it’s important that you keep your assignment and your audience in mind as you write. If your assignment requires an argument with a thesis statement and supporting evidence—as many academic writing assignments do—then you should limit the amount of summary in your paper. You might use summary to provide background, set the stage, or illustrate supporting evidence, but keep it very brief: a few sentences should do the trick. Most of your paper should focus on your argument. (Our handout on argument will help you construct a good one.)

Writing a summary of what you know about your topic before you start drafting your actual paper can sometimes be helpful. If you are unfamiliar with the material you’re analyzing, you may need to summarize what you’ve read in order to understand your reading and get your thoughts in order. Once you figure out what you know about a subject, it’s easier to decide what you want to argue.

You may also want to try some other pre-writing activities that can help you develop your own analysis. Outlining, freewriting, and mapping make it easier to get your thoughts on the page. (Check out our handout on brainstorming for some suggested techniques.)

Why is it so tempting to stick with summary and skip analysis?

Many writers rely too heavily on summary because it is what they can most easily write. If you’re stalled by a difficult writing prompt, summarizing the plot of The Great Gatsby may be more appealing than staring at the computer for three hours and wondering what to say about F. Scott Fitzgerald’s use of color symbolism. After all, the plot is usually the easiest part of a work to understand. Something similar can happen even when what you are writing about has no plot: if you don’t really understand an author’s argument, it might seem easiest to just repeat what he or she said.

To write a more analytical paper, you may need to review the text or film you are writing about, with a focus on the elements that are relevant to your thesis. If possible, carefully consider your writing assignment before reading, viewing, or listening to the material about which you’ll be writing so that your encounter with the material will be more purposeful. (We offer a handout on reading towards writing.)

How do I know if I’m summarizing?

As you read through your essay, ask yourself the following questions:

- Am I stating something that would be obvious to a reader or viewer?

- Does my essay move through the plot, history, or author’s argument in chronological order, or in the exact same order the author used?

- Am I simply describing what happens, where it happens, or whom it happens to?

A “yes” to any of these questions may be a sign that you are summarizing. If you answer yes to the questions below, though, it is a sign that your paper may have more analysis (which is usually a good thing):

- Am I making an original argument about the text?

- Have I arranged my evidence around my own points, rather than just following the author’s or plot’s order?

- Am I explaining why or how an aspect of the text is significant?

Certain phrases are warning signs of summary. Keep an eye out for these:

- “[This essay] is about…”

- “[This book] is the story of…”

- “[This author] writes about…”

- “[This movie] is set in…”

Here’s an example of an introductory paragraph containing unnecessary summary. Sentences that summarize are in italics:

The Great Gatsby is the story of a mysterious millionaire, Jay Gatsby, who lives alone on an island in New York. F. Scott Fitzgerald wrote the book, but the narrator is Nick Carraway. Nick is Gatsby’s neighbor, and he chronicles the story of Gatsby and his circle of friends, beginning with his introduction to the strange man and ending with Gatsby’s tragic death. In the story, Nick describes his environment through various colors, including green, white, and grey. Whereas white and grey symbolize false purity and decay respectively, the color green offers a symbol of hope.

Here’s how you might change the paragraph to make it a more effective introduction:

In The Great Gatsby, F. Scott Fitzgerald provides readers with detailed descriptions of the area surrounding East Egg, New York. In fact, Nick Carraway’s narration describes the setting with as much detail as the characters in the book. Nick’s description of the colors in his environment presents the book’s themes, symbolizing significant aspects of the post-World War I era. Whereas white and grey symbolize the false purity and decay of the 1920s, the color green offers a symbol of hope.

This version of the paragraph mentions the book’s title, author, setting, and narrator so that the reader is reminded of the text. And that sounds a lot like summary—but the paragraph quickly moves on to the writer’s own main topic: the setting and its relationship to the main themes of the book. The paragraph then closes with the writer’s specific thesis about the symbolism of white, grey, and green.

How do I write more analytically?

Analysis requires breaking something—like a story, poem, play, theory, or argument—into parts so you can understand how those parts work together to make the whole. Ideally, you should begin to analyze a work as you read or view it instead of waiting until after you’re done—it may help you to jot down some notes as you read. Your notes can be about major themes or ideas you notice, as well as anything that intrigues, puzzles, excites, or irritates you. Remember, analytic writing goes beyond the obvious to discuss questions of how and why—so ask yourself those questions as you read.

The St. Martin’s Handbook (the bulleted material below is quoted from p. 38 of the fifth edition) encourages readers to take the following steps in order to analyze a text:

- Identify evidence that supports or illustrates the main point or theme as well as anything that seems to contradict it.

- Consider the relationship between the words and the visuals in the work. Are they well integrated, or are they sometimes at odds with one another? What functions do the visuals serve? To capture attention? To provide more detailed information or illustration? To appeal to readers’ emotions?

- Decide whether the sources used are trustworthy.

- Identify the work’s underlying assumptions about the subject, as well as any biases it reveals.

Once you have written a draft, some questions you might want to ask yourself about your writing are “What’s my point?” or “What am I arguing in this paper?” If you can’t answer these questions, then you haven’t gone beyond summarizing. You may also want to think about how much of your writing comes from your own ideas or arguments. If you’re only reporting someone else’s ideas, you probably aren’t offering an analysis.

What strategies can help me avoid excessive summary?

- Read the assignment (the prompt) as soon as you get it. Make sure to reread it before you start writing. Go back to your assignment often while you write. (Check out our handout on reading assignments.)

- Formulate an argument (including a good thesis) and be sure that your final draft is structured around it, including aspects of the plot, story, history, background, etc. only as evidence for your argument. (You can refer to our handout on constructing thesis statements.)

- Read critically—imagine having a dialogue with the work you are discussing. What parts do you agree with? What parts do you disagree with? What questions do you have about the work? Does it remind you of other works you’ve seen?

- Make sure you have clear topic sentences that make arguments in support of your thesis statement. (Read our handout on paragraph development if you want to work on writing strong paragraphs.)

- Use two different highlighters to mark your paper. With one color, highlight areas of summary or description. With the other, highlight areas of analysis. For many college papers, it’s a good idea to have lots of analysis and minimal summary/description.

- Ask yourself: What part of the essay would be obvious to a reader/viewer of the work being discussed? What parts (words, sentences, paragraphs) of the essay could be deleted without loss? In most cases, your paper should focus on points that are essential and that will be interesting to people who have already read or seen the work you are writing about.

But I’m writing a review! Don’t I have to summarize?

That depends. If you’re writing a critique of a piece of literature, a film, or a dramatic performance, you don’t necessarily need to give away much of the plot. The point is to let readers decide whether they want to enjoy it for themselves. If you do summarize, keep your summary brief and to the point.

Instead of telling your readers that the play, book, or film was “boring,” “interesting,” or “really good,” tell them specifically what parts of the work you’re talking about. It’s also important that you go beyond adjectives and explain how the work achieved its effect (how was it interesting?) and why you think the author/director wanted the audience to react a certain way. (We have a special handout on writing reviews that offers more tips.)

If you’re writing a review of an academic book or article, it may be important for you to summarize the main ideas and give an overview of the organization so your readers can decide whether it is relevant to their specific research interests.

If you are unsure how much (if any) summary a particular assignment requires, ask your instructor for guidance.

Works consulted

We consulted these works while writing this handout. This is not a comprehensive list of resources on the handout’s topic, and we encourage you to do your own research to find additional publications. Please do not use this list as a model for the format of your own reference list, as it may not match the citation style you are using. For guidance on formatting citations, please see the UNC Libraries citation tutorial. We revise these tips periodically and welcome feedback.

Barnet, Sylvan. 2015. A Short Guide to Writing about Art, 11th ed. Upper Saddle River, NJ: Prentice Hall.

Corrigan, Timothy. 2014. A Short Guide to Writing About Film, 9th ed. New York: Pearson.

Lunsford, Andrea A. 2015. The St. Martin’s Handbook, 8th ed. Boston: Bedford/St Martin’s.

Zinsser, William. 2001. On Writing Well: The Classic Guide to Writing Nonfiction, 6th ed. New York: Quill.

You may reproduce this handout for non-commercial use if you use the entire handout and attribute the source: The Writing Center, University of North Carolina at Chapel Hill.


Ivana Vidakovic

Jan 24, 2023

How To Write a Summary of an Article - Guide & Examples

Learn how to summarize an article, where to start, what to include, and how to keep it short and interesting with this practical guide.


Have you ever considered why article summaries attract so much attention online?

And why they matter so much to writers?

It would be demoralizing to pour a great deal of effort and enthusiasm into an article only to have it end in a banal, trite manner.

It's like a well-made film with a vague ending.

A poor summary of an article isn't just detrimental to the piece overall; it can also leave you feeling like your precious time has been squandered.

This post will go over some guidelines on how to summarize an article, such as where to start, what to include, and how to keep it short and interesting.

Moreover, we will offer some tried-and-true solutions that can help you speed up the summarizing process.

But before we get into that, let's figure out why we have to summarize articles in the first place.

Why Do We Need to Summarize Articles?

When you need to convey the gist of a lengthy article to someone who has not yet read it, a summary is your best bet.

It allows readers to get the gist of an article quickly without having to read it from start to finish. Your readers can more easily remember and retain the main points of an article if they are correctly summarized.